In this issue

⚡ 7 AI takes for city communicators

🤖 The room where it happens (and why you should be in it)

📱 The "neighbor test" for government technology

🎙 Kristy Dalton on AI and human judgment

✅ Follow me on LinkedIn for more

Oh, hi!

There is a scene in Hamilton where Aaron Burr sings about the one thing he wants more than anything: to be in the room where it happens. The room where the deals get made. The room where the decisions land. The room he keeps getting shut out of.

That is what is happening to comms teams right now with AI. Your city is making decisions about chatbots, automated responses, and resident-facing tools. And in most cities, the communicators find out after the tool is live and a resident screenshots something wrong.

This week is a lightning round. Seven quick takes on AI. And one theme: you need to be in the room where it happens.

⚡ Seven Quick Takes

1. The "wait and see" era is over

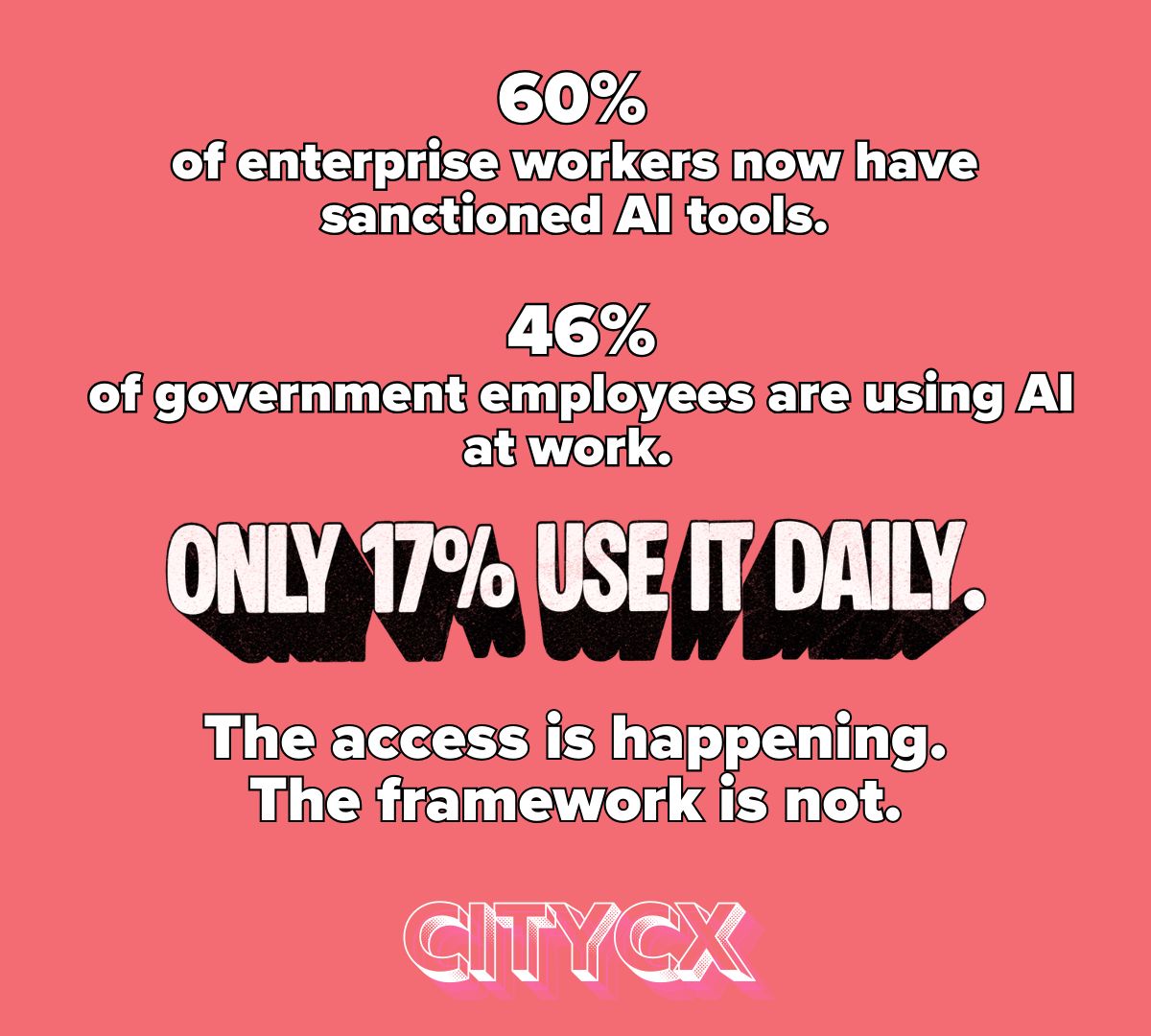

A 2025 MissionSquare survey of 2,000 public employees found that 46% are already using AI tools at work. Meanwhile, Deloitte's 2026 State of AI report found that 60% of enterprise workers now have sanctioned AI tools, up from fewer than 40% just one year ago. The private sector is not waiting. Your employees are not waiting. The gap between where everyone is headed and what cities are prioritizing is where the risk lives. Not in adopting AI too fast. In pretending you have more time than you do. I saw this same pattern play out with social media a decade ago. The cities that waited did not avoid risk. They just fell behind.

2. You need to be in the room where it happens

This is the hill I will stand on. The words your city uses are a promise. A chatbot that gives wrong information is not a tech failure. It is a trust failure. And trust is a communications problem. If your city is building an AI tool and the comms team finds out when a resident screenshots something wrong, you do not have an AI strategy. You have a PR crisis waiting to happen. Burr spent the whole show trying to get into the room. You do not have to wait to be invited. Walk in.

3. The Neighbor Test

Here is a simple framework for evaluating any government technology: does it act like a neighbor or like Big Brother? A good neighbor is helpful, knows their limits, and tells you when they are not sure. A good neighbor does not pretend to know things they do not know. A good neighbor checks their facts before giving you advice. That is what government AI should feel like. Bounded. Only sharing information you have verified. Transparent about what it can and cannot do. That should be the minimum standard for any AI tool your city deploys.

4. The data says AI works. But "works" is doing a lot of heavy lifting.

That same MissionSquare survey found that more than half of public employees reported improved productivity from AI, and over 60% said residents were satisfied. Those numbers are encouraging. But satisfied compared to what? If residents were previously on hold for 45 minutes and now a chatbot answers in 30 seconds, satisfaction goes up. That does not mean the chatbot gave the right answer. It means it gave a fast one. Satisfaction scores measure experience. They do not measure accuracy. Your city needs to measure both.

5. AI will replace some jobs. Pretending otherwise is not honest.

I am not going to sugarcoat this. When cities adopt AI tools that handle 20% of service requests, those efficiencies show up somewhere. Sometimes it is vacant positions that never get filled. Sometimes it is hours that get cut. Sometimes it is people. The comms team should be telling both sides of this story. Not just the press release about innovation. Also the honest conversation about what changes for the people who work there. Every person on your team matters. If AI replaces any of their roles, you should be the one having that conversation. Not the one reading about it in a council agenda.

6. The biggest risk is not adopting AI too fast. It is adopting it without a framework.

MissionSquare found that 45% of public employees cite data privacy as their top AI concern, and 37% worry about output reliability. Those are legitimate concerns. But the biggest risk is not the concerns people are raising. It is the absence of a framework for addressing them. There is no playbook. No one in the building has been asked to think about this deliberately. That is how you end up with a chatbot that pulls answers from the open internet instead of vetted city data. Not because anyone made a bad decision, but because no one made a deliberate one. Before you build anything, answer three questions: what do residents need, what do we know for certain, and what are we not willing to guess about?

7. Government is not an exception to how the world works

I say this all the time, and I will keep saying it: government is not an exception to how the world works. Amazon built its innovation culture around "two-pizza teams": small groups of ten or fewer people who own a product end-to-end. In government, AI decisions get made by IT, procurement reviews the contract, legal reviews the terms of service, and comms finds out last. That is not a workflow. That is a relay race where nobody passes the baton. The private sector figured out decades ago that the people closest to the customer need to be in the room when products get built. Your residents deserve the same.

This Part Really Matters

Technology is never the hard part. The hard part is making sure the technology says what you mean. AI can draft a response in seconds. It cannot tell you whether that response will make a resident trust you or lose faith in you. That judgment, knowing your community, reading the room, understanding what people actually need to hear, is the one thing a chatbot will never replace. And it is exactly what your comms team brings to the table. So be in the room. Not because you were invited. Because the work does not get done right without you.

Save this. Share it.

What You Can Do

Right now (15 minutes):

Ask one question: Walk over to your IT department (or send a Slack message) and ask: "Are we building or considering any AI tools right now?" If the answer is yes and you did not know, that is your signal.

This week (before Friday):

Request a seat at the table. Send an email to whoever leads digital or IT in your city: "I would like to be included in any conversations about AI-powered resident-facing tools. The comms team needs to review anything that talks to the public before it goes live." You do not need permission to ask. You need permission not to.

Plant the seed:

Forward this newsletter to your city manager with one line: "Can we talk about whether our comms team is involved in AI decisions? I want to make sure we are not building trust problems we will have to clean up later."

Kristy Dalton runs the Government Social Media Conference. She has trained thousands of city communicators. And she has a warning about AI-generated content that every PIO needs to hear.

The posts look perfect. The grammar is clean. The formatting is right. And they still miss.

"If you use AI to generate social media posts, it can look like great social media content, but it can also really not be great social media content. You need to have that eye for critically looking at this for is it going to resonate? Do I know my community?"

That is the question AI cannot answer for you. It can draft the post. It cannot tell you whether your residents will trust it.

Also worth hearing: Sherice Torres, former CMO of Google Wallet, on why the behavioral change hurdle for AI adoption mirrors what she saw with mobile payments: "It was a change in behavior. People had paid with cash or credit cards for their entire lives. As far back as we could remember in our lifetimes, in our parents' lifetimes, in our grandparents' lifetimes. So using something different, especially when it came to money, brought up fear." The same fear is slowing AI adoption in government. And the same answer applies: meet people where they are, and show them the tool works before you ask them to trust it.

What is the one AI question your city has not answered yet? Hit reply and tell me. I read every one.

Talk soon,

Dana

Was this forwarded to you? Subscribe to CityCX here.

📲 Between Newsletters

I post quick takes between newsletters. If you want more of my work, follow me on LinkedIn.

About Dana

Former Emmy-winning television producer and Chief Digital Officer. Built Gilbert, AZ's national award-winning Office of Digital Government. Now helping city communicators tell stories that build trust.

Oh, hi! Stories Podcast: Spotify | Apple Podcasts

Trusted by city leaders, PIOs, and civic innovators across the country.
